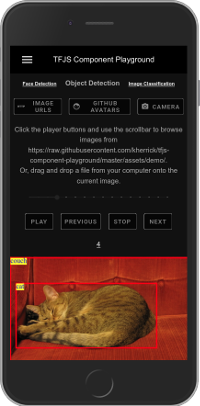

TensorFlow.js Component Playground

Sunday, 08 March 2020

After experimenting with the AIY Vision Kit and the Coral USB Accelerator, I decided to try “edge computing” from another angle by wrapping TensorFlow.js with LitElement to make a few Web Components for testing. The tfjs-backend-wasm package is loaded to use WebAssembly as the backend; it seems comparable to WebGL for lite models but performs worse with medium-sized ones. Fortunately, the TensorFlow.js team is committed to supporting the platform and will continue to improve it.
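Since the WebAssembly backend may not suit every model or browser, here is a minimal sketch of picking a backend with a fallback. The helper name `selectBackend` and the fallback order are my own assumptions for illustration, not what the components actually do; it relies only on `tf.setBackend`, `tf.ready`, and `tf.getBackend` from TensorFlow.js, and takes the `tf` namespace as a parameter so it can be tried out with a stub.

```javascript
// Hypothetical helper (not from the components themselves): try each backend
// in order and keep the first one that initializes.
// `tf` is the TensorFlow.js namespace, passed in so a stub can stand in for it.
async function selectBackend(tf, preferred = ['wasm', 'webgl', 'cpu']) {
  for (const name of preferred) {
    try {
      // tf.setBackend resolves to true when the backend initialized successfully.
      if (await tf.setBackend(name)) {
        await tf.ready();
        return tf.getBackend();
      }
    } catch (err) {
      // Initialization threw; fall through and try the next backend.
    }
  }
  throw new Error('No TensorFlow.js backend could be initialized');
}

// In a page this would be wired up roughly like so (assumed setup):
//   import * as tf from '@tensorflow/tfjs';
//   import '@tensorflow/tfjs-backend-wasm';
//   const backend = await selectBackend(tf);
```

Passing a different order, such as `['webgl', 'wasm', 'cpu']`, would be one way to favor WebGL for the medium-sized models.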

The models are set up with little modification to the configuration, so it’s interesting to watch what’s detected and how things are classified before diving into actually building and changing them. Google’s AutoML project might be something to check out next. Each component exposes methods so the user can provide images or video for inference.
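To give a feel for what an exposed inference method can look like, here is a rough sketch rather than the actual component code. The class name `ClassifierController` and its methods are hypothetical; the only library behavior assumed is that the loaded model has a `classify(source, topK)` method, as the `@tensorflow-models/mobilenet` model does. A LitElement component could hold one of these and forward its own public method to it.

```javascript
// Hypothetical wrapper: class and method names are illustrative only.
class ClassifierController {
  // loadModel: async function that resolves to a model with classify(source, topK).
  constructor(loadModel) {
    this.loadModel = loadModel;
    this.modelPromise = null;
  }

  // Load lazily and reuse the same promise so concurrent callers share one load.
  ready() {
    if (!this.modelPromise) {
      this.modelPromise = this.loadModel();
    }
    return this.modelPromise;
  }

  // Public method: accepts an image, video, or canvas element and
  // resolves to whatever predictions the model returns.
  async classify(source, topK = 3) {
    if (!source) {
      throw new TypeError('classify() needs an image, video, or canvas element');
    }
    const model = await this.ready();
    return model.classify(source, topK);
  }
}

// Browser wiring (assumed setup):
//   import * as mobilenet from '@tensorflow-models/mobilenet';
//   const controller = new ClassifierController(() => mobilenet.load());
//   const predictions = await controller.classify(document.querySelector('img'));
```

Injecting the loader keeps the wrapper independent of any one model package, so swapping in an object or face detection model would only mean passing a different loader and adjusting the method it calls.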

The last thing of note is that all image classification, object detection, and facial detection is completed client-side. By design, that’s more private because no data is sent to a server, which is kind of neat.